When running an engagement survey, we want the response rate to be as high as possible. There are several reasons for this, including:

- We want to maximise the number of employees who get to voice their opinions, and
- We want to maximise the accuracy of the data, to help teams and organisations take action on employee feedback.

Unless you get a 100% response rate (i.e. all employees complete the survey), you may still wonder what the results would have looked like if everyone had responded. How much could the results have shifted higher or lower? The margin of error (MOE) statistic helps answer this question.

The margin of error defines a “confidence interval” around the survey results: it indicates how much the results could vary if everyone in an organisation or team had responded. The size of the margin of error depends on both the response rate and the size of the group (headcount) being surveyed.
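For readers who want to see where the number comes from, one common textbook formula for the margin of error of a proportion (such as a % favourable score) is sketched below. It assumes that respondents behave like a random sample of the group and uses a 95% confidence level. The survey platform's own method isn't described here and may differ.

```latex
\text{MOE} = z \sqrt{\frac{p(1-p)}{n}} \, \sqrt{\frac{N-n}{N-1}}
```

Here n is the number of responses, N is the headcount, p is the proportion answering favourably (p = 0.5 gives the widest, most conservative margin), and z = 1.96 for 95% confidence. The second square root is the finite population correction. It reaches zero when n = N, which matches the idea that a 100% response rate leaves no uncertainty about what everyone thinks.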

A higher response rate means the results are more representative of the whole group. The following table shows how a better response rate reduces the margin of error and leads to more reliable (accurate) results.

Generally, the smaller the group, the higher the response rate required for an accurate result. The effect of different group sizes is shown in the following table: even though the response rate is the same for all teams, the margin of error is largest for the smallest team.

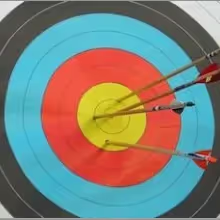
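The two patterns in these tables can be reproduced with a minimal Python sketch. It assumes a standard margin-of-error formula for a proportion at 95% confidence, with a finite population correction because the headcount is known. This is an illustration, not necessarily the method your survey platform uses. The function name `margin_of_error` is hypothetical.

```python
import math


def margin_of_error(responses: int, headcount: int, p: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of error for a % favourable score, as a proportion (0.05 = 5%).

    Assumptions (illustrative, not the survey platform's documented method):
    respondents behave like a simple random sample of the group, p = 0.5 gives
    the most conservative (widest) margin, and z = 1.96 means 95% confidence.
    """
    if not 0 < responses <= headcount:
        raise ValueError("responses must be between 1 and headcount")
    if responses == headcount:
        return 0.0  # 100% response rate: every opinion is counted, no sampling error
    standard_error = math.sqrt(p * (1 - p) / responses)
    # Finite population correction: shrinks the margin as responses approach headcount
    fpc = math.sqrt((headcount - responses) / (headcount - 1))
    return z * standard_error * fpc


# Same headcount, rising response rate: the margin of error falls
for rate in (0.2, 0.5, 0.9):
    n = round(100 * rate)
    print(f"Headcount 100, {rate:.0%} response rate: +/-{margin_of_error(n, 100):.1%}")

# Same 60% response rate, different group sizes: the smallest team has the largest margin
for headcount in (10, 50, 500):
    n = round(headcount * 0.6)
    print(f"Headcount {headcount}, 60% response rate: +/-{margin_of_error(n, headcount):.1%}")
```

Under these assumptions, 90 responses from a headcount of 100 gives about +/-3.3%, and 20 responses gives about +/-19.7%. That is the same pattern as the examples below, but not exactly the 3.0% and 18.0% they quote, which suggests the survey platform uses somewhat different calculations or assumptions.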

The following examples show how to apply the margin of error to a set of survey results.

Work Area A had a 90% response rate from a headcount of 100, giving a margin of error of 3.0%. This means the results for each question can be considered accurate to within plus or minus 3.0%. Applying this at the question level, if the % favourable for “Our policies and procedures are efficient and well-designed” was 33%, the result with its margin of error would be 33% +/-3.0%. In other words, if all the staff from that work area had responded, we could be confident that the score would be only a little higher or lower (between 30% and 36%). However, our interpretation of this result wouldn’t change much: it is still a low result.

Work Area B had a 20% response rate from a headcount of 100, giving a margin of error of 18.0%. This means the results for each question can be considered accurate only to within plus or minus 18.0%. Applying this to the same question, if the % favourable for “Our policies and procedures are efficient and well-designed” was 33%, the result with its margin of error would be 33% +/-18.0%. In other words, if all the staff from that work area had responded, the score might have been quite different (between 15% and 51%). It is still likely to be a low result, but there’s a chance it might be as high as 51% (a more moderate result).
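The arithmetic in these two examples can be made explicit with a small Python sketch. The `score_range` helper is hypothetical and not part of any survey tool. It takes the % favourable score and the margin of error, both in percentage points, and keeps the range within 0 to 100%.

```python
def score_range(score_pct: float, moe_pct: float) -> tuple[float, float]:
    """Return the (low, high) range for a % favourable score, clamped to 0-100%.

    Hypothetical helper: both arguments are in percentage points (33 means 33%).
    """
    low = max(0.0, score_pct - moe_pct)
    high = min(100.0, score_pct + moe_pct)
    return low, high


# 90% response rate: 33% favourable with a margin of error of 3.0%
print(score_range(33, 3.0))   # (30.0, 36.0)
# 20% response rate: 33% favourable with a margin of error of 18.0%
print(score_range(33, 18.0))  # (15.0, 51.0)
```

Clamping matters for very high or very low scores. For example, a score of 10% with a margin of error of 18.0% can't fall below 0%.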

1. Always try to maximise your response rate while the survey is ‘live’, as a higher response rate results in a lower margin of error (i.e. more accurate results).

2. If scores have changed but the change is smaller than the margin of error, you can’t be confident that the change is real.

3. If there is a high margin of error, you need to review the results more cautiously. Allowing for the margin of error: